RAG Pipeline Architecture

Give your LLM access to your data without fine-tuning. RAG bridges the gap between general-purpose language models and domain-specific knowledge.

When You Need This

You want to build an AI assistant that answers questions about your organization's documents — contracts, policies, knowledge bases, product documentation, medical records. Fine-tuning an LLM on your data is expensive, slow, and creates a model that's frozen at the point of training. You need an architecture where the LLM can access up-to-date, domain-specific information at query time, cite its sources, and avoid hallucinating facts that aren't in your documents. RAG (Retrieval-Augmented Generation) is how you get there.

Pattern Overview

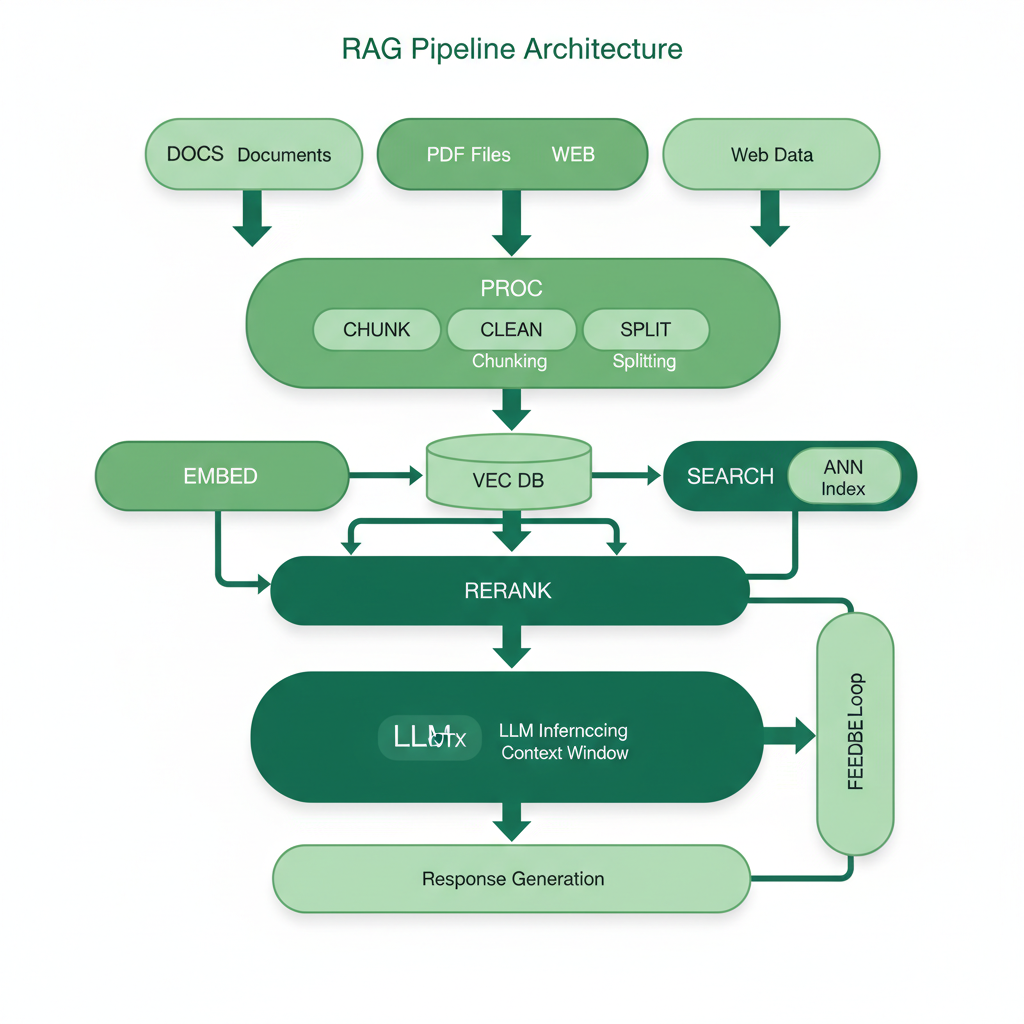

RAG augments LLM generation with retrieved context from a knowledge base. At query time, the system converts the user's question into an embedding, searches a vector database for semantically similar document chunks, and includes the most relevant chunks as context in the LLM prompt. This grounds the model's response in actual documents, enables source citation, and keeps the knowledge base updatable without retraining. A production RAG pipeline handles ingestion (parsing, chunking, embedding), retrieval (vector search, reranking, hybrid search), and generation (prompt construction, streaming, guardrails).
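
The query-time flow described above can be sketched in a few lines. This is a minimal illustration, not a production implementation: `embed` is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and the function names, chunk ids, and prompt wording are all hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[dict], k: int = 2) -> list[dict]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context_chunks: list[dict]) -> str:
    """Place retrieved chunks in the prompt, tagged with ids for citation."""
    context = "\n".join(f"[{c['id']}] {c['text']}" for c in context_chunks)
    return (
        "Answer using only the context below and cite chunk ids in brackets.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical knowledge base of already-chunked documents.
chunks = [
    {"id": "policy-1", "text": "Employees accrue 20 vacation days per year."},
    {"id": "policy-2", "text": "Expense reports are due within 30 days of travel."},
    {"id": "policy-3", "text": "Remote work requires manager approval."},
]

question = "How many vacation days do employees get?"
top = retrieve(question, chunks, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

In a real pipeline the resulting prompt would be sent to the LLM, and the bracketed ids give the model something concrete to cite.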

Reference Architecture

The architecture has two pipelines. The ingestion pipeline processes documents through parsing (PDF, DOCX, HTML extraction), chunking (semantic or fixed-size with overlap), embedding (via embedding model), and storage (vector database + document store). The query pipeline takes a user question, generates a query embedding, retrieves candidate chunks from the vector database, reranks them for relevance, constructs a prompt with the top chunks as context, and streams the LLM response with source citations.
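
As a rough illustration of the fixed-size-with-overlap option in the ingestion pipeline, the sketch below slides a window over a token list. It assumes tokens are already available as a list (a real pipeline would use the embedding model's tokenizer), and the function name `chunk_tokens` is hypothetical. The default window and overlap mirror the 512/50 figures quoted in the component list, although that list applies them to semantic chunking.

```python
def chunk_tokens(tokens: list[str], size: int = 512, overlap: int = 50) -> list[list[str]]:
    """Split tokens into windows of `size`, each sharing `overlap` tokens with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and overlap must satisfy 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks


# Stand-in document of 1000 "tokens".
tokens = [f"t{i}" for i in range(1000)]
chunks = chunk_tokens(tokens)
print([len(c) for c in chunks])  # [512, 512, 76]
```

The overlap means a sentence that straddles a boundary still appears whole in at least one chunk, at the cost of storing some text twice.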

- Document Ingestion Pipeline: Multi-format parser (Apache Tika, Unstructured, or custom) that extracts text from PDFs, DOCX, HTML, Markdown, and scanned images (OCR). Chunking strategy splits documents into retrievable units — MW defaults to semantic chunking (split at paragraph/section boundaries) with 512-token target size and 50-token overlap

- Embedding Service: Converts text chunks into vector embeddings. Uses models like OpenAI text-embedding-3-large, Cohere embed-v4, or open-source alternatives (BGE, E5). Batch processing for ingestion, single-query processing for search

- Vector Database: Stores embeddings with metadata for filtered search. Supports approximate nearest neighbor (ANN) search at scale. See Scalable Vector Database Architecture for production-scale considerations

- Retrieval & Reranking: Two-stage retrieval — fast ANN search returns top-50 candidates, then a cross-encoder reranker (Cohere Rerank, BGE Reranker, or ColBERT) scores each candidate against the query for precise relevance ranking. Top-5 chunks go to the LLM

- Hybrid Search: Combines vector (semantic) search with keyword (BM25) search. This catches cases where vector search misses exact terminology (product codes, legal clauses, medical terms) that keyword search handles well. Reciprocal rank fusion merges the two result sets
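
Reciprocal rank fusion, mentioned in the Hybrid Search component, can be sketched as follows. Each document scores the sum of 1 / (k + rank) over every result list it appears in. The constant `k = 60` is a commonly used default and an assumption here, not a value taken from this pattern; the chunk ids are made up for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of ids; higher fused score means better combined rank."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score, breaking ties by id for deterministic output.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["c3", "c1", "c7"]   # hypothetical ANN (semantic) results
keyword_hits = ["c1", "c9", "c3"]  # hypothetical BM25 results
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)  # ['c1', 'c3', 'c9', 'c7']
```

Because only ranks are used, the method does not need the vector and keyword scores to be on comparable scales, which is why it is a common choice for merging the two result sets.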

Design Decisions & Trade-offs

System Architecture Overview

Technology Choices

| Layer | Technologies |
|---|---|
| Document Parsing | Unstructured, Apache Tika, LlamaParse, Docling, custom OCR (Tesseract, AWS Textract) |
| Embedding | OpenAI text-embedding-3-large, Cohere embed-v4, BGE-M3, E5-large-v2 |
| Vector Database | Milvus, Pinecone, Qdrant, Weaviate, pgvector (for small-scale) |
| Keyword Search | Elasticsearch, OpenSearch, PostgreSQL full-text search |
| Reranking | Cohere Rerank, BGE Reranker, ColBERT v2, FlashRank |
| LLM | Claude (via AI Gateway), GPT-4, Gemini; provider-agnostic via AI SDK |
| Orchestration | LangChain, LlamaIndex, or custom pipeline (MW preference for production) |

When to Use / When to Avoid

| Use When | Avoid When |
|---|---|
| Users need answers grounded in your organization's specific documents | The knowledge base is < 50 pages; just put it in the system prompt |
| Documents are updated frequently and the AI needs current information | You need the model to learn a new skill/behavior, not access new facts (fine-tune instead) |
| Source citation and auditability are requirements (legal, compliance, healthcare) | The questions are purely conversational and don't require factual grounding |
| Multiple user groups need access to different document subsets (permission-filtered RAG) | You're building a creative writing tool where factual accuracy isn't the goal |

Our Approach

MW builds RAG pipelines from the retrieval quality outward: we benchmark retrieval precision before touching the LLM prompt. A RAG system with mediocre retrieval and a great LLM produces confident-sounding wrong answers. Our standard pipeline includes a retrieval evaluation harness: a set of test queries with known-relevant documents, measured by MRR@5 and NDCG@10. We iterate on chunking, embedding model, and reranking until retrieval metrics hit target thresholds, and only then optimize generation. We've built RAG systems across legal document review, healthcare knowledge bases, and multi-language customer support, and the common lesson is that, in our experience, retrieval quality accounts for roughly 80% of answer quality.
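
A retrieval evaluation harness of this kind can be approximated with two small functions. This is a sketch under assumptions, not MW's actual harness: relevance is treated as binary (a document is either known-relevant or not), and the query ids, document ids, and function names are hypothetical.

```python
import math


def mrr_at_k(results: dict[str, list[str]], relevant: dict[str, set[str]], k: int = 5) -> float:
    """Mean over queries of 1/rank of the first relevant document in the top k (0 if none)."""
    total = 0.0
    for query, ranked in results.items():
        for rank, doc_id in enumerate(ranked[:k], start=1):
            if doc_id in relevant[query]:
                total += 1.0 / rank
                break
    return total / len(results)


def ndcg_at_k(ranked: list[str], relevant_set: set[str], k: int = 10) -> float:
    """Binary-relevance NDCG: discounted gain of the ranking divided by the ideal ranking's gain."""
    dcg = sum(
        1.0 / math.log2(i + 1)
        for i, doc_id in enumerate(ranked[:k], start=1)
        if doc_id in relevant_set
    )
    ideal_hits = min(len(relevant_set), k)
    idcg = sum(1.0 / math.log2(i + 1) for i in range(1, ideal_hits + 1))
    return dcg / idcg if idcg else 0.0


# Hypothetical test set: retrieved rankings and known-relevant documents per query.
results = {"q1": ["d2", "d1", "d5"], "q2": ["d9", "d4", "d3"]}
relevant = {"q1": {"d1"}, "q2": {"d9", "d3"}}

mrr = mrr_at_k(results, relevant, k=5)
mean_ndcg = sum(ndcg_at_k(results[q], relevant[q], k=10) for q in results) / len(results)
print(f"MRR@5={mrr:.3f}  NDCG@10={mean_ndcg:.3f}")
```

Running the same harness after each change to chunking, embedding model, or reranker makes it possible to compare configurations on identical queries before any prompt work begins.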

Related Blueprints

- AI Customer Support Agent — RAG-powered support agent with knowledge base retrieval

- AI Document Processing Pipeline — Document ingestion, parsing, and AI-powered extraction

Related Industry Guides

- AI for Legal — RAG applications in contract review and legal research

Related Case Studies

- Document Intelligence — Local RAG pipeline for spreadsheet and document analysis

- AI Chat Platform — Multi-model chat with document retrieval and GDPR-compliant data handling

Related Architecture Patterns

Scalable Vector Database Architecture

Embedding search is easy at 10K vectors. At 100M vectors with sub-100ms P99, it's an infrastructure problem — and that's what this pattern solves.

AI/ML Pipeline Architecture

Models don't run themselves. The pipeline that trains, validates, deploys, and monitors your models is the actual product — the model is just one artifact.

Data-Intensive Platform Architecture

When your competitive advantage is in your data, the platform that collects, transforms, stores, and surfaces that data is the most important thing you'll build.
