Data-Intensive Platform Architecture

When your competitive advantage is in your data, the platform that collects, transforms, stores, and surfaces that data is the most important thing you'll build.

When You Need This

Your organization has data scattered across dozens of systems — CRM, ERP, billing, support tickets, sensor data, third-party APIs — and nobody can answer basic business questions without a week of manual data pulling. Reports are built in spreadsheets, analysts wait days for data engineering to prepare datasets, and the "single source of truth" is whichever database someone queried last. You need a data platform that ingests from all sources, transforms data into analysis-ready models, and serves insights to both dashboards and AI/ML systems. This isn't a data warehouse project — it's a platform that makes data a usable organizational asset.

Pattern Overview

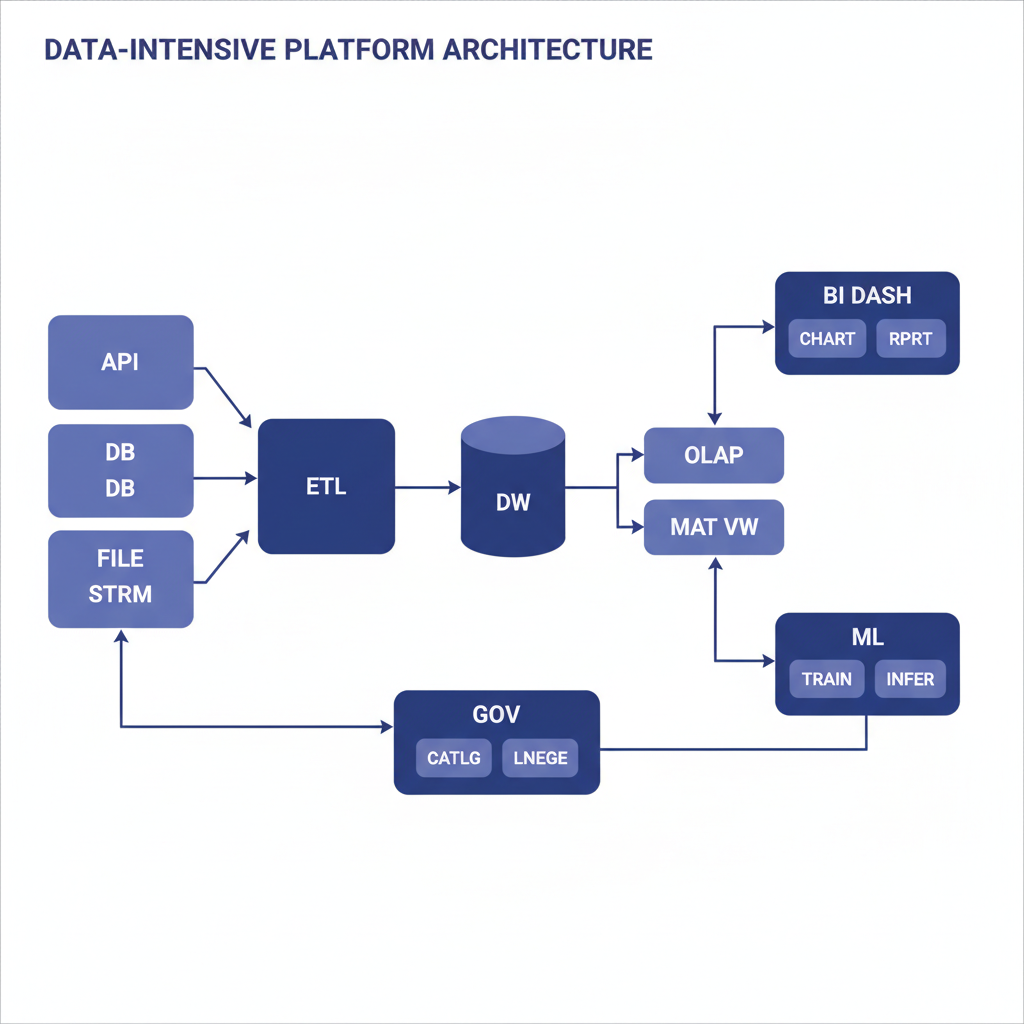

Data-intensive platform architecture creates a unified data infrastructure spanning ingestion, storage, transformation, and consumption. The ingestion layer pulls data from operational databases (via change data capture, CDC), APIs, event streams, and file uploads into a centralized data lake (raw, unprocessed). The transformation layer (dbt, Spark, or custom) cleans, models, and aggregates data into a data warehouse (structured, query-optimized). The consumption layer serves data to BI dashboards, API endpoints, ML feature stores, and embedded analytics. Data governance, lineage tracking, and access control operate across all layers.

Reference Architecture

Data flows through a medallion architecture: Bronze (raw ingestion), Silver (cleaned and conformed), Gold (business-ready aggregates). The Bronze layer stores raw data in Parquet format on S3/GCS, partitioned by source and ingestion timestamp; no records are dropped and no business logic is applied. The Silver layer applies schema enforcement, deduplication, type casting, and joins across sources; this is where data becomes consistent. The Gold layer contains business-specific aggregates, denormalized tables, and pre-computed metrics optimized for specific use cases (dashboards, ML training, API serving).
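
To make the three layers concrete, here is a minimal pure-Python sketch of records moving through them, assuming a hypothetical orders feed with `order_id`, `amount`, `order_date`, and `updated_at` fields. `bronze_path` only builds the partitioned object key (actually writing Parquet would need a library such as pyarrow), `to_silver` enforces a schema and keeps the latest version of each key, and `to_gold_daily_revenue` produces one pre-computed metric. All names are illustrative; in practice this logic usually lives in dbt models or Spark jobs.

```python
from dataclasses import dataclass
from datetime import date, datetime, timezone
from decimal import Decimal
from typing import Any, Callable

Schema = dict[str, Callable[[Any], Any]]

# Hypothetical Silver schema for an orders feed: column -> casting function.
ORDERS_SCHEMA: Schema = {
    "order_id": str,
    "amount": Decimal,
    "order_date": date.fromisoformat,
    "updated_at": datetime.fromisoformat,
}


@dataclass(frozen=True)
class RawRecord:
    """A Bronze record: the untouched source payload plus ingestion metadata."""
    source: str
    payload: dict
    ingested_at: datetime  # must be timezone-aware


def bronze_path(bucket: str, record: RawRecord) -> str:
    """Object key partitioned by source and ingestion time (Hive-style key=value folders)."""
    ts = record.ingested_at.astimezone(timezone.utc)
    return (
        f"s3://{bucket}/bronze/source={record.source}/"
        f"ingest_date={ts:%Y-%m-%d}/ingest_hour={ts:%H}/part.parquet"
    )


def to_silver(records: list[RawRecord], key: str, order_by: str, schema: Schema) -> list[dict]:
    """Enforce the schema, cast types, and keep only the latest version of each key."""
    latest: dict[Any, dict] = {}
    for rec in records:
        try:
            row = {col: cast(rec.payload[col]) for col, cast in schema.items()}
        except (KeyError, TypeError, ValueError, ArithmeticError):
            # Rejected by schema enforcement. Bronze still holds the raw record;
            # a real pipeline would route it to a quarantine table for review.
            continue
        current = latest.get(row[key])
        if current is None or row[order_by] > current[order_by]:
            latest[row[key]] = row
    return sorted(latest.values(), key=lambda r: r[key])


def to_gold_daily_revenue(silver_rows: list[dict]) -> dict[str, Decimal]:
    """A Gold-style pre-computed metric: total order amount per order date."""
    totals: dict[str, Decimal] = {}
    for row in silver_rows:
        day = row["order_date"].isoformat()
        totals[day] = totals.get(day, Decimal("0")) + row["amount"]
    return totals
```

Keeping rejected rows recoverable in Bronze is what makes it safe for Silver to be strict: a schema fix can be replayed against the raw history.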

- Ingestion Layer: CDC connectors (Debezium, Fivetran, Airbyte) for database sources. API extractors for SaaS tools (Salesforce, HubSpot, Stripe). Event stream consumers for real-time data (Kafka). File processors for batch uploads (CSV, Excel, API dumps). All ingestion is incremental where possible, full-refresh only when necessary

- Storage Layer: Object storage (S3/GCS) holding Parquet files for the data lake, managed through an open table format (Iceberg or Delta Lake) that adds ACID transactions on the lake. Cloud data warehouse (Snowflake, BigQuery, Redshift) for structured querying. The data lake holds everything (cheap, durable); the warehouse holds curated data (fast, expensive)

- Transformation Layer: dbt (data build tool) for SQL-based transformations — models are version-controlled, tested, and documented. Spark or Databricks for large-scale transformations that exceed SQL capabilities. Orchestrated by Airflow, Dagster, or Prefect with dependency-aware scheduling, automatic retries, and SLA monitoring

- Data Governance: Column-level lineage tracking (what source field became what warehouse column). Access control with row-level security and column masking for PII. Data quality checks (Great Expectations, dbt tests) that block bad data from reaching the Gold layer. A data catalog (DataHub, Atlan) for discoverability
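
The Ingestion Layer's rule of "incremental where possible" usually comes down to tracking a high-water mark per source table. The sketch below assumes a source that exposes an `updated_at` column; `extract_incremental` and `make_in_memory_source` are hypothetical helpers for illustration, not part of Fivetran, Airbyte, or Debezium.

```python
from datetime import datetime
from typing import Callable, Iterable, Optional

# Hypothetical source reader: given a watermark, returns rows changed after it
# (all rows when the watermark is None). A real extractor would run a query like
# "WHERE updated_at > :watermark" or use a SaaS API's updated-since filter.
Fetch = Callable[[Optional[datetime]], Iterable[dict]]


def extract_incremental(
    fetch: Fetch, watermark: Optional[datetime], cursor_field: str = "updated_at"
) -> tuple[list[dict], Optional[datetime]]:
    """Pull only rows newer than the stored high-water mark.

    Returns the rows plus the new watermark. The caller should persist the new
    watermark only after the rows are durably written to Bronze, so a failed
    load is simply re-extracted on the next run.
    """
    rows = list(fetch(watermark))
    new_watermark = max((row[cursor_field] for row in rows), default=watermark)
    return rows, new_watermark


def make_in_memory_source(table: list[dict], cursor_field: str = "updated_at") -> Fetch:
    """Hypothetical stand-in for a database or API, used only for illustration."""
    def fetch(since: Optional[datetime]) -> list[dict]:
        return [r for r in table if since is None or r[cursor_field] > since]
    return fetch
```

A timestamp cursor has a known gap: rows committed later but stamped with exactly the watermark value are skipped by the strict `>` comparison. Common mitigations are re-reading a small overlap window and deduplicating in Silver, or using log-based CDC (Debezium), which reads the database's change log instead of a timestamp column.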
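
Airflow, Dagster, and Prefect all provide dependency-aware scheduling and retries out of the box. As an illustration of the idea only (not any orchestrator's API), this sketch orders hypothetical tasks with Python's standard `graphlib.TopologicalSorter` (Python 3.9+) and retries each failing task before stopping the run, so downstream models never build on a failed upstream.

```python
import time
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(
    tasks: dict[str, Callable[[], None]],
    deps: dict[str, set[str]],
    max_attempts: int = 3,
    backoff_s: float = 1.0,
) -> list[str]:
    """Run tasks so each one starts only after all of its upstream tasks succeed.

    A failing task is retried up to max_attempts times with linear backoff; if it
    still fails, the exception propagates and no downstream task runs.
    """
    # Include tasks with no declared upstreams so they are scheduled too.
    # Every name used in deps is assumed to also be a key in tasks.
    graph = {name: deps.get(name, set()) for name in tasks}
    completed: list[str] = []
    for name in TopologicalSorter(graph).static_order():  # raises CycleError on cycles
        for attempt in range(1, max_attempts + 1):
            try:
                tasks[name]()
                break
            except Exception:
                if attempt == max_attempts:
                    raise
                time.sleep(backoff_s * attempt)
        completed.append(name)
    return completed
```

This runs tasks one at a time for clarity; production orchestrators execute independent branches in parallel and add the SLA alerting mentioned above.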
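
Warehouses such as Snowflake and BigQuery enforce row-level security and column masking natively through their own policy features, and that is where these rules belong in production. Purely to show what such a policy does to query results, here is a Python sketch; `AccessPolicy`, `PII_COLUMNS`, `MASKING_KEY`, and the `region` column are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical secret; in practice load it from a secrets manager, never hard-code it.
MASKING_KEY = b"replace-me"
PII_COLUMNS = frozenset({"email", "phone"})


@dataclass(frozen=True)
class AccessPolicy:
    """Hypothetical per-role policy combining row-level security and column masking."""
    allowed_regions: frozenset  # rows outside these regions are hidden
    unmasked_pii: frozenset = frozenset()  # PII columns this role may see in clear


def mask(value: str) -> str:
    # Keyed hash (HMAC-SHA256): deterministic, so masked values still support joins
    # and distinct counts, but without the key they cannot be matched against a
    # dictionary of known emails or phone numbers.
    digest = hmac.new(MASKING_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "pii_" + digest[:12]


def apply_policy(rows: list[dict], policy: AccessPolicy) -> list[dict]:
    """Return only the rows and column values the role is allowed to see."""
    visible = []
    for row in rows:
        if row.get("region") not in policy.allowed_regions:
            continue  # row-level security
        out = dict(row)  # copy so the caller's data is never modified
        for col in PII_COLUMNS - policy.unmasked_pii:
            if out.get(col) is not None:
                out[col] = mask(str(out[col]))  # column masking
        visible.append(out)
    return visible
```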

Design Decisions & Trade-offs


Technology Choices

| Layer | Technologies |
|---|---|
| Ingestion | Fivetran, Airbyte, Debezium, custom Python extractors, Kafka Connect |
| Storage | S3/GCS (Parquet, Delta Lake, Iceberg), Snowflake, BigQuery, Redshift |
| Transformation | dbt, Apache Spark, Databricks, pandas (small-scale) |
| Orchestration | Airflow, Dagster, Prefect, dbt Cloud |
| Governance | DataHub, Atlan, Great Expectations, dbt tests, Monte Carlo (observability) |
| Consumption | Metabase, Looker, Superset, embedded analytics APIs, ML feature stores |

When to Use / When to Avoid

| Use When | Avoid When |
|---|---|
| Data is scattered across 5+ systems and no one has a unified view | You have one database and one dashboard; a direct connection is sufficient |
| Multiple teams (analysts, data scientists, product) need access to the same data | The data volume is small (< 1 GB) and doesn't justify the platform overhead |
| Compliance requires data lineage, access control, and audit trails on data access | You're building a transactional application, not an analytics platform |
| ML/AI features need curated, feature-store-ready datasets | The organization doesn't have the data engineering capacity to operate the platform |

Our Approach

MW builds data platforms with a "quick-wins-first" approach — we identify the 3-5 most painful data questions the organization can't currently answer, build the minimum pipeline to answer them, and expand from there. We don't start with a 6-month "build the data lake" project. Our dbt projects include comprehensive testing (uniqueness, not-null, referential integrity, custom business rules), documentation (every model and column described), and freshness monitoring. We've built data platforms processing 50M+ rows/day for healthcare auditing, inventory management, and financial reporting — and the consistent lesson is that data quality controls are the hardest and most important part.
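
The test categories named above correspond to dbt's built-in generic tests (`unique`, `not_null`, `relationships`), custom tests for business rules, and `dbt source freshness`. As a hedged illustration of how such checks act as a gate in front of the Gold layer, here is a pure-Python sketch; every function name is hypothetical, and the null handling follows dbt's convention of ignoring nulls in `unique` and `relationships`.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional


def check_not_null(rows: list[dict], column: str) -> list[str]:
    bad = sum(1 for r in rows if r.get(column) is None)
    return [f"not_null({column}): {bad} row(s)"] if bad else []


def check_unique(rows: list[dict], column: str) -> list[str]:
    values = [r[column] for r in rows if r.get(column) is not None]
    dupes = len(values) - len(set(values))
    return [f"unique({column}): {dupes} duplicate(s)"] if dupes else []


def check_relationship(rows: list[dict], column: str, parents: list[dict], parent_column: str) -> list[str]:
    """Referential integrity: every non-null value must exist in the parent table."""
    keys = {p[parent_column] for p in parents}
    orphans = sum(1 for r in rows if r.get(column) is not None and r[column] not in keys)
    return [f"relationships({column}): {orphans} orphan(s)"] if orphans else []


def check_rule(rows: list[dict], name: str, predicate: Callable[[dict], bool]) -> list[str]:
    """Custom business rule expressed as a row-level predicate."""
    bad = sum(1 for r in rows if not predicate(r))
    return [f"rule({name}): {bad} row(s)"] if bad else []


def check_freshness(latest_loaded_at: datetime, max_age: timedelta, now: Optional[datetime] = None) -> list[str]:
    """Fail when the newest loaded data is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    age = now - latest_loaded_at
    return [f"freshness: {age} old, limit {max_age}"] if age > max_age else []


def promote_to_gold(rows: list[dict], failures: list[str]) -> list[dict]:
    """Gate: refuse to publish a model to Gold if any check failed."""
    if failures:
        raise ValueError("blocked promotion to Gold: " + "; ".join(failures))
    return rows
```

Collecting every failure before raising, rather than stopping at the first, gives the on-call engineer the full picture in one alert.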

Related Blueprints

- Intelligent Inventory Management System — Real-time inventory analytics from multi-source data

- Custom ERP for Manufacturing — Manufacturing data integration across production systems

- Supply Chain Visibility Platform — Cross-partner data aggregation and analytics

Related Case Studies

- Healthcare Auditing — Healthcare data auditing platform with compliance-grade lineage and access controls

- AI Accounting — Invoice OCR — Document extraction feeding into financial data pipelines

- Vendor Discovery — B2B supplier data aggregation with Elasticsearch-powered search

