On-Off Scaling Architecture

Don't pay for idle GPUs. Provision compute just-in-time, process the workload, and tear it down — turning capital expense into a per-job operating cost.

When You Need This

Your workload is bursty — video encoding jobs that spike when content is uploaded, ML training runs that need 8 GPUs for 4 hours then nothing, batch inference jobs triggered by business events, or rendering pipelines that run overnight. You're either over-provisioned (paying for idle resources 80% of the time) or under-provisioned (jobs queue for hours during peaks). You need an architecture that provisions exactly the compute you need, when you need it, and releases it when the job completes — without the cold-start penalty that makes "scale to zero" impractical for GPU workloads.

Pattern Overview

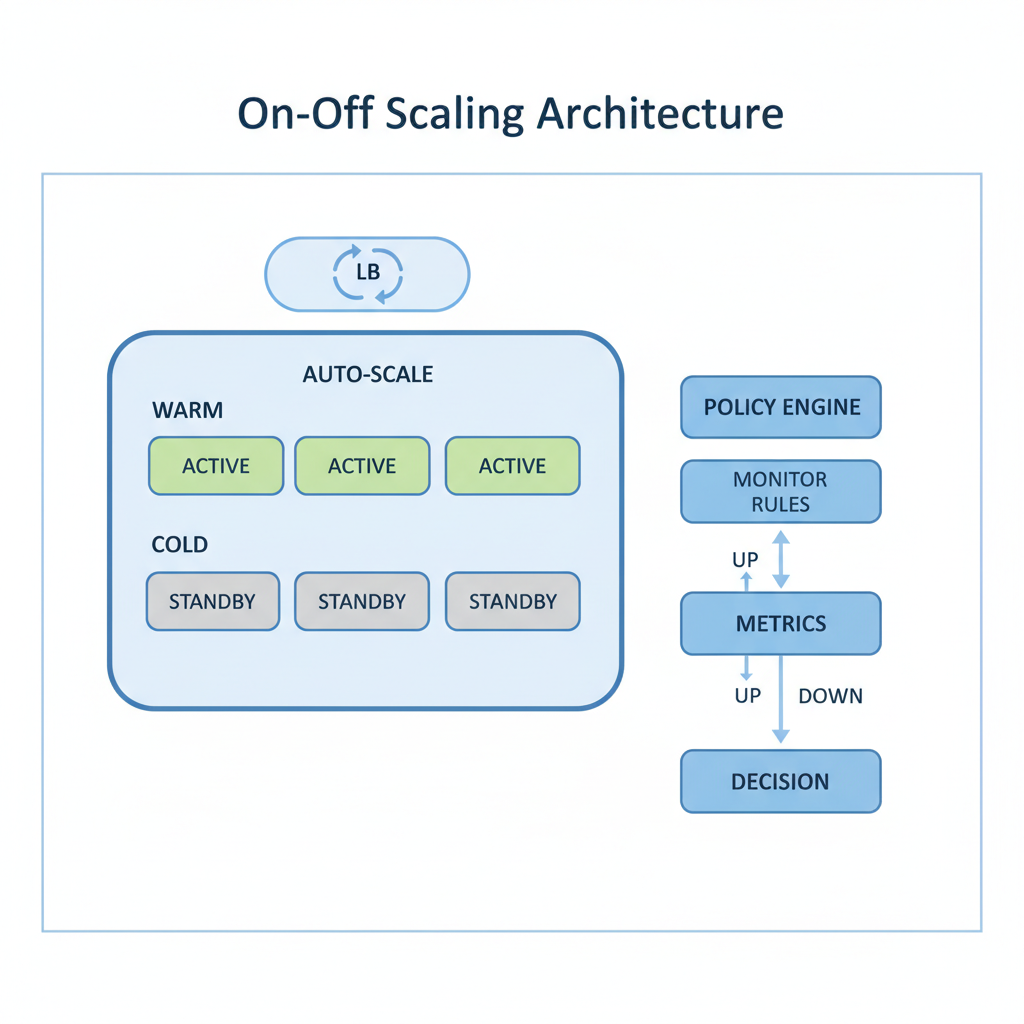

On-off scaling architecture manages compute resources through warm/cold pooling, job-queue-driven provisioning, and automated teardown. A warm pool maintains a small number of pre-initialized instances ready for immediate use. A cold pool provisions additional capacity from spot/preemptible instances when demand exceeds the warm pool. A job orchestrator routes work to available instances, monitors progress, handles retries on spot eviction, and triggers scale-down when the queue drains. The pattern is particularly critical for GPU workloads where cold start (container pull + model loading) can take 3-10 minutes.

Reference Architecture

The system centers on a job queue (SQS, Redis, or custom) that buffers incoming work requests. A scaling controller monitors queue depth, assigns work to idle warm-pool instances first, and provisions spot instances for the cold pool only when the warm pool is exhausted. Each worker instance pulls jobs from the queue, executes the workload (encoding, training, inference), reports completion, and then either returns to the pool or terminates. A checkpoint manager handles spot eviction by saving intermediate state to S3, so a job can resume on a different instance without starting over.
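The controller's "warm pool first, then cold pool, then teardown" decision can be sketched as a pure function. This is a minimal illustration, not a production controller: `PoolState`, `ScalingPlan`, `plan_capacity`, and the `max_cold` budget cap are hypothetical names, and the sketch assumes one job per instance at a time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PoolState:
    queue_depth: int   # jobs waiting in the queue
    warm_idle: int     # warm instances ready to take a job
    cold_total: int    # spot instances that exist (busy, idle, or starting)
    cold_idle: int     # subset of cold_total with no job assigned


@dataclass(frozen=True)
class ScalingPlan:
    assign_to_warm: int   # jobs to hand to warm instances right now
    launch_cold: int      # additional spot instances to request
    terminate_cold: int   # idle spot instances to release


def plan_capacity(state: PoolState, max_cold: int) -> ScalingPlan:
    """Decide one control-loop tick: warm pool first, then cold pool, then teardown."""
    assign_to_warm = min(state.queue_depth, state.warm_idle)
    remaining = state.queue_depth - assign_to_warm

    # Idle spot instances absorb leftover jobs before anything new is launched.
    absorbed = min(remaining, state.cold_idle)
    remaining -= absorbed
    spare_cold = state.cold_idle - absorbed

    # Request one spot instance per unserved job, capped by the (assumed) budget limit.
    headroom = max(0, max_cold - state.cold_total)
    launch_cold = min(remaining, headroom)

    # Spot instances that are still idle have no work waiting, so release them.
    return ScalingPlan(assign_to_warm, launch_cold, spare_cold)


busy_plan = plan_capacity(PoolState(queue_depth=10, warm_idle=2, cold_total=3, cold_idle=1), max_cold=8)
drained_plan = plan_capacity(PoolState(queue_depth=0, warm_idle=2, cold_total=3, cold_idle=3), max_cold=8)
```

A real controller would also account for jobs that busy instances will pick up soon, and would wait out a grace period before terminating, so that a brief lull does not trigger a teardown followed by another cold start.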

- Job Queue & Scheduler: Prioritized job queue with configurable concurrency limits per job type. Supports delayed execution, dead-letter queues for failed jobs, and priority lanes (express jobs get warm pool instances, standard jobs use the cold pool). Typical choices are AWS SQS, BullMQ on Redis, or Temporal for complex workflows

- Warm Pool Manager: Maintains N pre-initialized instances with models loaded in GPU memory, containers running, and health checks passing. Instances cycle through: idle → assigned → processing → idle. Pool size is configurable by time-of-day (larger during business hours, smaller overnight) and adjustable based on historical demand patterns

- Cold Pool Provisioner: Provisions additional capacity from spot instances (AWS), preemptible VMs (GCP), or serverless GPU providers (RunPod, Modal, Salad). Handles spot interruption notifications by migrating jobs to available instances. Uses a diversified instance type strategy (multiple GPU types, multiple AZs) to maximize spot availability

- Checkpoint & Recovery: For long-running jobs (ML training, large video encoding), periodic checkpointing saves intermediate state to S3. On spot eviction, the job is re-queued and resumes from the last checkpoint. For short jobs (< 10 min), the overhead of checkpointing typically exceeds the cost of a restart, so these jobs simply retry from scratch
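The checkpoint-and-resume behaviour described in the last component can be sketched as follows. This is an illustration under stated assumptions: a local directory stands in for S3, eviction is simulated with the hypothetical `evict_at` parameter, and `run_job` and `SpotEviction` are made-up names rather than part of any library.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class SpotEviction(Exception):
    """Raised by the simulated worker when its instance is reclaimed."""


def run_job(job_id: str, total_steps: int, store: Path,
            checkpoint_every: int, evict_at: Optional[int] = None) -> int:
    """Run a job, resuming from its last checkpoint if one exists.

    Returns the step the run resumed from (0 for a fresh start).
    """
    ckpt = store / f"{job_id}.json"
    start = json.loads(ckpt.read_text())["step"] if ckpt.exists() else 0
    for step in range(start + 1, total_steps + 1):
        if evict_at is not None and step == evict_at:
            raise SpotEviction(f"evicted before finishing step {step}")
        # ... one unit of real work goes here (a training step, a video segment) ...
        if step % checkpoint_every == 0:
            tmp = ckpt.with_suffix(".tmp")
            tmp.write_text(json.dumps({"step": step}))
            tmp.replace(ckpt)  # rename into place so a partial file is never read
    ckpt.unlink(missing_ok=True)  # job finished, checkpoint no longer needed
    return start


with tempfile.TemporaryDirectory() as d:
    store = Path(d)
    try:
        run_job("train-1", 100, store, checkpoint_every=10, evict_at=37)
    except SpotEviction:
        pass  # the orchestrator would re-queue the job here
    resumed_from = run_job("train-1", 100, store, checkpoint_every=10)
```

In this run the job is evicted at step 37 and resumes from the checkpoint written at step 30, so steps 31 to 36 are repeated. That repeated work is the price of a longer checkpoint interval, and it is the trade-off that makes restart-from-scratch reasonable for short jobs.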

Design Decisions & Trade-offs

Technology Choices

| Layer | Technologies |
|---|---|
| Compute | AWS EC2 Spot (G5/P4), GCP Preemptible (A2/L4), RunPod Serverless, Modal |
| Orchestration | Kubernetes (Karpenter for autoscaling), AWS Batch, custom job orchestrator |
| Job Queue | AWS SQS, BullMQ (Redis), Temporal, Celery |
| Storage | S3 (checkpoints, model artifacts), NVMe (model cache), EFS (shared workspace) |
| Monitoring | CloudWatch/Prometheus (queue depth, instance utilization, job latency), custom cost dashboards |

When to Use / When to Avoid

| Use When | Avoid When |
|---|---|
| Workload is bursty: peak demand is 5x+ average demand | Traffic is steady and predictable: right-sized reserved instances are cheaper |
| GPU/high-compute jobs that are expensive when idle | The workload is lightweight CPU processing that fits serverless (Lambda) |
| Jobs can tolerate 1-5 minute cold start for cold pool provisioning | Sub-second job start latency is required: you need always-on infrastructure |
| Cost optimization is a primary concern and spot pricing offers 60-90% savings | Spot interruption would cause data loss that checkpointing can't mitigate |

Our Approach

MW designs on-off scaling with a "cost per job" lens — we model the total cost of processing one unit of work (one video, one training run, one batch inference) across different scaling strategies and pick the one that minimizes cost at the required latency SLA. Our implementations include real-time cost dashboards that show per-job cost, infrastructure utilization, and spot savings. We've built on-off GPU infrastructure that reduced video processing costs by 70% compared to reserved instances, and ML training clusters that provision 64 GPUs for a 4-hour training run and release them automatically.
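The "cost per job" comparison can be illustrated with a small model. This is a sketch, not the actual tooling: the `Strategy` fields, the function names, and every number in `STRATEGIES` are hypothetical placeholders. The always-on entry assumes 20% utilization (one useful hour per five billed hours), and the spot rate assumes a 70% discount.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Strategy:
    name: str
    hourly_rate: float           # price per instance-hour (illustrative)
    billed_hours_per_job: float  # instance-hours billed per job, idle time included
    start_latency_min: float     # minutes before a submitted job starts running


def cost_per_job(s: Strategy) -> float:
    """Total billed cost attributed to one unit of work."""
    return s.hourly_rate * s.billed_hours_per_job


def cheapest_within_sla(strategies: List[Strategy],
                        max_start_latency_min: float) -> Optional[Strategy]:
    """Pick the lowest cost-per-job strategy that still meets the start-latency SLA."""
    eligible = [s for s in strategies if s.start_latency_min <= max_start_latency_min]
    return min(eligible, key=cost_per_job) if eligible else None


# Illustrative inputs for a job that needs one GPU-hour of actual work.
STRATEGIES = [
    Strategy("always-on", hourly_rate=1.00, billed_hours_per_job=5.0, start_latency_min=0.0),
    Strategy("warm-pool", hourly_rate=1.00, billed_hours_per_job=1.5, start_latency_min=0.5),
    Strategy("cold-spot", hourly_rate=0.30, billed_hours_per_job=1.1, start_latency_min=5.0),
]

relaxed = cheapest_within_sla(STRATEGIES, max_start_latency_min=10.0)
strict = cheapest_within_sla(STRATEGIES, max_start_latency_min=1.0)
```

With these placeholder numbers, a 10-minute start SLA selects the spot-backed cold pool, while a 1-minute SLA rules it out and the warm pool becomes the cheapest eligible option. Real inputs would come from billing data and measured job runtimes.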

Related Blueprints

- GPU Cluster Orchestration for AI Workloads — GPU provisioning and orchestration for ML training

- Real-Time AI Video Surveillance System — Burst inference for video analysis events

- Live Sports Highlight Generator — Event-driven video processing with burst compute

Related Case Studies

- On-Off Pattern Video Processing — GPU provisioning with warm/cold pools for video encoding workloads

- Video Encoding Platform — Serverless and spot-based encoding with autoscaled worker pools

